9 Common Misunderstandings About WebVR

Since WebVR is relatively new, and since some of you haven’t been reading the blog posts, here is a short primer designed to answer some common questions about web-based virtual reality.

- WebVR enables Virtual Reality support on common web browsers via a JavaScript API.

- The 1.0 version was just proposed, but is not yet finalized by the W3C.

- The API can’t just “3D” a web page. You need to use a JavaScript-based 3D library like BabylonJS or THREE.js to create the VR world, which in turn requires that the browser support the WebGL API.

- The WebVR API handles detecting VR displays (which are in the VR headset) and communicating with them about user ‘pose’ (head orientation or room-scale movement) and features of the Head-Mounted Display (HMD) like offset (spacing between the eyes) and field of view.

- The WebVR API also has a Gamepad extension, for detecting and using input from gamepads.

- In VR mode, the 3D scene is split into two stereo views from slightly different positions, allowing our eyes to fuse the two images and perceive depth.

- The WebVR API also creates “sensor fusion” – meaning it reports things like headset orientation and position in a room so that the scene can be adjusted.

- Most browsers haven’t implemented WebVR yet, but both the Chrome and Firefox teams are rapidly progressing to a native code API by the end of 2016. The Samsung Mobile browser already supports native WebVR, which is great for Gear VR users.
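Because support is so uneven, a page usually has to check for the API before using it. Here is a minimal sketch of that check, assuming the WebVR 1.0 entry point `navigator.getVRDisplays()` and the `displayName` attribute on each display. The `NavigatorLike` and `VRDisplayLike` interfaces and the `listVRDisplayNames` helper are illustrative names, not part of the API.

```typescript
// Minimal shapes for the parts of the WebVR 1.0 draft used in this sketch.
interface VRDisplayLike {
  displayName: string;
}

interface NavigatorLike {
  // Only present in browsers (or polyfills) that implement WebVR.
  getVRDisplays?: () => Promise<VRDisplayLike[]>;
}

// Resolves to the names of the detected VR displays, or to an empty
// list when the browser does not expose the WebVR entry point at all.
function listVRDisplayNames(nav: NavigatorLike): Promise<string[]> {
  if (typeof nav.getVRDisplays !== "function") {
    return Promise.resolve([]);
  }
  return nav.getVRDisplays().then((displays) =>
    displays.map((display) => display.displayName)
  );
}
```

In a browser you would pass the real `navigator` object. An empty result means either no WebVR support or no headset detected, so a page should fall back to a plain 3D view in both cases.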

1. Headset VR requires a separate, monster computer

A big point of confusion is where the VR “happens” in WebVR – it is quite different for a dedicated headset like Oculus compared to a smartphone like the Apple iPhone. The typical images you see don’t make this distinction.

The most sophisticated use of WebVR is for Headset or Head-Mounted Display (HMD) systems.

- In this mode, you have a high-end headset connected to a host computer by USB and HDMI cables.

- The host computer is responsible for getting and rendering the 3D scene, which is then relayed to the headset.

- The headset’s role is to show the scene, and relay position and orientation information back to the host computer.

In the case of WebVR, it is the web browser that is rendering the scene. The headset simply displays what the browser sends to it from the web browser window. Current implementations only send a single HTML5 <canvas> element from the web page.
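One way to picture the single-canvas arrangement is that each eye gets its own half of the canvas. The sketch below assumes a simple side-by-side split, left eye on the left half and right eye on the right half. `Viewport` and `eyeViewports` are hypothetical helper names, not WebVR calls.

```typescript
// A rectangle inside the canvas, in pixels.
interface Viewport {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Splits one canvas into a left-eye and a right-eye viewport, side by side.
function eyeViewports(
  canvasWidth: number,
  canvasHeight: number
): { left: Viewport; right: Viewport } {
  const half = Math.floor(canvasWidth / 2);
  return {
    left: { x: 0, y: 0, width: half, height: canvasHeight },
    // The right eye takes whatever remains, so odd widths still cover the canvas.
    right: { x: half, y: 0, width: canvasWidth - half, height: canvasHeight },
  };
}
```

A renderer would draw the scene twice per frame, once into each rectangle (for example by passing the values to WebGL's `gl.viewport(x, y, width, height)`), with the camera shifted slightly for each eye.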

And to send that 3D out to the headset (even if you use a browser), you have to install drivers for the headset, just like other external devices attached to a computer. Once installed, compatible web browsers use the drivers to communicate with the headset.

Oculus, HTC Vive, and now OSVR headsets all supply the driver software you need to connect headsets. And yes, the drivers are device-specific – you can’t use an Oculus driver with the Vive, and vice-versa.

To render VR, you have to render fast. You’re rendering two stereo views instead of one scene, and the resolution sent to each eye has to be greater than 1200px for a feeling of “immersion”, according to research by Facebook. This in turn dictates a host computer with a graphics card powerful enough to do “4K video”, or nearly that fast. Most computers today (even gaming rigs from a year ago) don’t have the juice. Instead, you need something like a “VR Ready” GTX 1070 or 1080, cards that cost several hundred dollars.
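A rough back-of-the-envelope calculation shows why the comparison to 4K video is reasonable. The figures are assumptions, not numbers from this article: a combined headset panel of 2160 × 1200 pixels refreshed 90 times per second, compared against 4K UHD (3840 × 2160) at 30 frames per second.

```typescript
// Raw pixels that must be produced each second for a given display mode.
function pixelsPerSecond(
  width: number,
  height: number,
  framesPerSecond: number
): number {
  return width * height * framesPerSecond;
}

// Assumed headset mode: both eyes together, 2160 x 1200 at 90 Hz.
const headsetRate = pixelsPerSecond(2160, 1200, 90); // 233,280,000

// Assumed comparison mode: 4K UHD video at 30 frames per second.
const uhdRate = pixelsPerSecond(3840, 2160, 30); // 248,832,000

// Fraction of the 4K workload that the headset needs: 0.9375
const headsetVersusUhd = headsetRate / uhdRate;
```

Under those assumptions the headset needs about 94% of the pixel throughput of 4K video at 30 frames per second, and that is before counting the extra work of drawing the scene from two viewpoints.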

If you try using an older computer, it just won’t work. You’ve got to build a big rig, spending at least $1,500 US, like the ones described below:

- http://techbuyersguru.com/1500-vr-ready-mini-itx-pc-build

- https://www.amazon.com/Nightblade-i7-6700K-Windows-Graphics-Computer/dp/B01HP167I0/

You’ll also need to buy the headset, between $500 – $800.

- HTC Vive (developer’s choice)

- Oculus Rift (widest market share)

- OSVR (low-cost open-source alternative): http://www.osvr.org/hardware/buy/

2. You can’t use a Mac for headset VR

Yes, let’s rub it in… The requirement for a cutting-edge computer is why there are no Macs (not even the “trash can”) that can run headset-based VR. While Apple systems are used by graphic and web designers, they are optimized for big 2D scenes, not 3D. The result is that no Mac can run the headsets, even though everything down to a high-end PowerMac can render basic, non-stereo, 3D scenes.

While Apple has made noises about supporting VR, it will take some time to improve the graphics hardware. And the “appliance” nature of Macs means that it will be difficult to upgrade as VR quality progresses. So, unless you’re willing to build a “hackintosh” with a video card big enough to support VR, Macs aren’t the development platform you need for this work.

If you do this kind of build, you can use Mac OS, and hope that Apple someday upgrades its graphics (and Oculus its Mac graphic drivers) so it can compete in the virtual reality market.

3. You can’t build practical VR for the Web using Unity or Unreal Engine

LOTS of developers and startups are currently staking their hopes on using Unity3D, a great, popular system for building games, especially those targeted at smartphones. So what’s the problem?

First, native Unity apps can’t be distributed on the web because browser manufacturers have stopped supporting “plugins”. This is exactly the same change that doomed Adobe Flash as a web authoring tool. Unity used to have a web browser plugin, but it won’t work in modern browsers and support was dropped.

Now, Unity and Unreal both support “transpiling” their native apps to HTML5 and JavaScript. The transpile works for simple worlds, but the enormous size of the resulting payload (tens to hundreds of megabytes) dwarfs the typical WebVR app (1-2 megabytes). Even with today’s broadband, you’ll be asking your web users to wait several minutes to use your VR world. And the more complex games and worlds that Unity and Unreal excel at will be even bigger.
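To put the payload sizes in perspective, here is a small download-time estimate. The 10 megabit-per-second connection is a hypothetical figure chosen only for illustration, and the calculation ignores latency, compression, and caching.

```typescript
// Seconds needed to download a payload at a given sustained bandwidth.
// Sizes are in megabytes and bandwidth in megabits per second, hence the
// factor of 8 to convert bytes to bits.
function downloadSeconds(
  payloadMegabytes: number,
  bandwidthMbps: number
): number {
  return (payloadMegabytes * 8) / bandwidthMbps;
}

// A typical 2 MB WebVR app on an assumed 10 Mbps connection: 1.6 seconds.
const smallAppSeconds = downloadSeconds(2, 10);

// A 200 MB transpiled build on the same connection: 160 seconds.
const largeBuildSeconds = downloadSeconds(200, 10);
```

Even with generous assumptions, the gap between a second or two and a few minutes is the kind of wait that web users are unlikely to tolerate.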

Unity is the hands-down favorite for building native mobile apps, but on the web, compared to WebVR, it’s a second choice.

4. You need the latest browsers to work with “headset” WebVR

If you’re working with Oculus Rift, HTC Vive, or one of the new OSVR headsets which support WebVR, you need to use a browser that natively supports the API. The current list of development or “experimental” browsers that use WebVR in September 2016 is:

- Chromium desktop

- Firefox Nightly desktop

- Samsung Internet Browser (with Gear VR enabled)

To get started, you need to download one of these builds. The current list is at:

If you’re working with the THREE.js 3D library, all is good. Unfortunately, at the time of writing the Firefox Nightly download doesn’t work with BabylonJS, so stick with Chromium if you are using that framework.

The important consequence of this is that, at least until mid-2017, you can’t expect consumers to have a WebVR compatible browser installed on their PCs.

5. You can still view and create VR using smartphones instead of headsets

All is not lost if you have an iOS or Android phone. Both Android and iOS can handle low-end VR without dedicated headsets. In this case, the mode is different – the headset display and the host rendering computer are essentially the same thing. Instead of wearing an active HMD, you plop your smartphone into a passive holder that does no rendering of its own. The smartphone is responsible for generating stereo views, distortion, and ultimate display.

Why can smartphones do this, when most desktops choke? The main reason is quality. Smartphones have smaller screens, which means they may be easier to update. So, the view through a smartphone won’t be as good, but is passable for some VR applications and for development.

6. Most smartphones require JavaScript polyfills to work

If you don’t have a high-end headset, you can still develop for WebVR in a “smartphone” mode. In smartphone mode, you load the VR scene on your smartphone, and plop it into a Google Cardboard-class headset. The headsets are extremely cheap, but they do have connections for hooking up audio and the buttons on the phone. So, with a late-model smartphone you can create and view VR, though the quality will be much lower than a dedicated headset.

It will take a long time for most consumers to get custom VR headsets, so smartphone-based VR is your “target device” unless you are creating WebVR apps for a niche professional market. Smartphone setups are cheaper, don’t need a host computer, and have no dangling USB or HDMI cables.

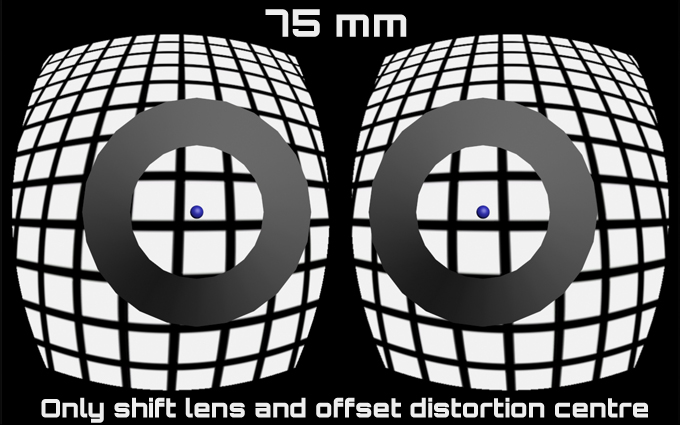

Smartphone WebVR does have some limitations, however. Unlike an HMD, smartphones don’t have a built-in way to “barrel distort” the scene to extend the field of view in the headset. The type of distortion that has to be applied is shown below.

The Rift and other high-end headsets automatically distort images so they are compatible with their lenses. Smartphone WebVR has to be distorted by JavaScript in the web browser, or the web browser itself.

Therefore, a smartphone solution requires that you use the webvr-polyfill library created by Boris Smus. This polyfill implements the WebVR 1.0 API for Google Cardboard (read smartphone) class VR devices, and also does the “barrel distortion” needed to render scenes on smartphones. The distorted images are un-distorted into a wide FOV scene by the lenses of Google Cardboard class headsets, which are similar to those in a more sophisticated HMD.
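To give a feel for what “barrel distortion” means numerically, here is a sketch of a common radial model. It is not taken from webvr-polyfill, and the function name and the coefficients `k1` and `k2` are hypothetical. For each output pixel, given in coordinates centered on the lens, the function returns the point in the undistorted image to sample from. Sampling farther out as you approach the edges squeezes the picture toward the center, which gives the barrel look.

```typescript
// For an output pixel at (x, y), measured from the lens center, return the
// coordinate in the undistorted source image that should be sampled.
// k1 and k2 are lens-specific coefficients (hypothetical values here).
function barrelSampleCoordinate(
  x: number,
  y: number,
  k1: number,
  k2: number
): [number, number] {
  const rSquared = x * x + y * y;
  // The scale grows with distance from the center, so edge pixels sample
  // from farther out than center pixels do.
  const scale = 1 + k1 * rSquared + k2 * rSquared * rSquared;
  return [x * scale, y * scale];
}
```

The center of the image is left untouched (scale of 1), while a pixel halfway to the edge with `k1 = 0.2` and `k2 = 0` samples from about 5% farther out. Real lens coefficients differ per headset, which is why a polyfill needs to know which viewer is in use.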

The polyfill will also upgrade desktop browsers, most notably Microsoft Edge, so you can check your 3D while you develop on a desktop without constantly strapping and unstrapping a Cardboard test device.

BabylonJS defines VR cameras in its API that will do barrel distortion as well, so you can use them directly without webvr-polyfill.

However, it is important to note that the polyfill WILL NOT enable a high-end headset. You can’t use a polyfill with an old browser and plug in your Oculus. Headsets need to talk to a desktop browser directly in native code, so you still need the dev versions of Chromium or Firefox Nightly, and drivers for the headset.

7. Degree of movement in VR varies widely by platform

There are three possible levels of movement in VR, depending on your headset:

- Head-tracking (“orientation”) only

- Orientation + standing up, sitting down

- Walking around a room (“room-scale”)

Google Cardboard class systems (and that includes Gear VR) only support orientation at present. So, you can only have an “armchair” experience with these devices.

In contrast, the HTC Vive supports full room-scale experiences. It includes two sensors that you mount at the ends of a small room to track your overall motions.

The Oculus Rift falls in the middle. It can detect you standing up, jumping, and other movement, but not full room-scale experiences.
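An app can adapt to these levels at runtime instead of hard-coding a headset list. The sketch below assumes the display reports whether it tracks orientation, whether it tracks position, and whether it supplies room (“stage”) information, which is how I read the `capabilities.hasOrientation`, `capabilities.hasPosition`, and `stageParameters` fields of the WebVR 1.0 draft. The flattened `DisplayInfo` shape, the `movementLevel` function, and the mapping onto the three levels are my own simplification.

```typescript
// Flattened, hypothetical summary of what a VR display reports about itself.
interface DisplayInfo {
  hasOrientation: boolean;     // head rotation is tracked
  hasPosition: boolean;        // head position is tracked
  hasStageParameters: boolean; // room / play-area information is available
}

type MovementLevel = "none" | "orientation-only" | "positional" | "room-scale";

// Maps reported capabilities onto the levels of movement described above.
function movementLevel(info: DisplayInfo): MovementLevel {
  if (info.hasPosition && info.hasStageParameters) {
    return "room-scale";
  }
  if (info.hasPosition) {
    return "positional";
  }
  if (info.hasOrientation) {
    return "orientation-only";
  }
  return "none";
}
```

With this kind of check, an “armchair” scene can be the default, with standing or walking interactions switched on only when the display says it can track them.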

8. Methods for interaction in VR vary widely by platform

There’s even more variation in support for interacting with the VR world beyond moving your head or body.

First, there is support for gamepad-style interaction. Many low-end Cardboard devices include a small gamepad-style controller, and higher-end headsets have dedicated buttons for interaction. The WebVR API exposes any gamepads which have declared their connection with the headset, and you can query them after calling navigator.getVRDisplays(). When you call navigator.getGamepads(), each gamepad tied to a VR display reports the id of the associated display, which might be your HMD. However, this part of the spec is very new in Sept. 2016 and highly experimental.
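As a sketch of how that matching could look, the helper below picks out the gamepads that belong to one display. It assumes each gamepad carries a `displayId` field equal to the id of its VR display, as in the draft Gamepad extension, and that the list may contain empty (`null`) slots the way `navigator.getGamepads()` results do. `GamepadLike` and `gamepadsForDisplay` are illustrative names.

```typescript
// Minimal shape of a gamepad entry for this sketch.
interface GamepadLike {
  id: string;
  // Assumed field from the draft Gamepad extension: the id of the VR
  // display this controller belongs to, if any.
  displayId?: number;
}

// Returns only the gamepads associated with the given display id,
// skipping the empty slots that a gamepad list can contain.
function gamepadsForDisplay(
  gamepads: Array<GamepadLike | null>,
  displayId: number
): GamepadLike[] {
  const matches: GamepadLike[] = [];
  for (let i = 0; i < gamepads.length; i++) {
    const pad = gamepads[i];
    if (pad !== null && pad.displayId === displayId) {
      matches.push(pad);
    }
  }
  return matches;
}
```

Because this part of the spec is experimental, an app should treat an empty result as normal and keep a fallback such as gaze-based interaction.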

For actual gestures and “picking” an object in VR the support is even more varied. Potentially, any device that detects the position of your body could be adapted for gesture detection. This includes systems like the Leap Motion controllers, as well as the controllers on the upcoming Oculus Touch. Integration of these devices under the WebVR API is still a ways off, though it is perfectly possible to write separate code to detect gestures as you use the WebVR API.

For now, the safest way for a WebVR app to support interaction is with “gaze detection”, which can be done with any VR framework. This means that when a user stares at an object long enough, the object is marked as “activated”. If the user keeps staring longer yet, it is “selected”. This kind of interaction is enough for simple VR displays (e.g. 360 video or room walkthroughs) which will probably form a large part of WebVR based apps in the near future.
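The dwell-time logic behind gaze detection is independent of the 3D library. Here is a minimal sketch, assuming your framework can already tell you which object (if any) sits under the center of the view on each frame, for example via a ray cast. The `GazeTracker` class and its thresholds are hypothetical.

```typescript
type GazeState = "idle" | "activated" | "selected";

// Tracks how long the user has looked at the same object and reports
// whether that object should count as activated or selected.
class GazeTracker {
  private targetId: string | null = null;
  private dwellMs = 0;
  private activateMs: number;
  private selectMs: number;

  constructor(activateMs: number, selectMs: number) {
    this.activateMs = activateMs;
    this.selectMs = selectMs;
  }

  // Call once per frame with the id of the object under the gaze
  // (or null for none) and the time elapsed since the previous frame.
  update(gazedId: string | null, elapsedMs: number): GazeState {
    if (gazedId !== this.targetId) {
      // Looking at something new (or at nothing): restart the timer.
      this.targetId = gazedId;
      this.dwellMs = 0;
    } else if (gazedId !== null) {
      this.dwellMs += elapsedMs;
    }
    if (this.targetId === null) return "idle";
    if (this.dwellMs >= this.selectMs) return "selected";
    if (this.dwellMs >= this.activateMs) return "activated";
    return "idle";
  }
}
```

With thresholds of, say, 1000 ms and 2500 ms, an object is highlighted after about a second of steady gaze and selected after two and a half. Looking away at any point resets the timer, which helps avoid accidental selections.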

9. You’ve got to follow the bleeding edge

Due to the provisional and rapidly evolving nature of WebVR, you won’t find standard tutorials or specs. It’s important that you join groups actively involved in creating and developing the spec. This way you can keep up with the latest trends, as well as ask and answer questions.

If you’re working with THREE.js, your best resource is the Slack Channel for WebVR.

If you’re working with BabylonJS, you’ll also need to check the HTML5GameDevs site for VR-related posts.